Agents Are (Finally) Real: An Explainer for CROs

AI agent hype becomes reality after a frantic few weeks.

For two years now, AI agents have been the next big thing in GTM. AI SDRs were all the rage. Back in 2024, Marc Benioff was talking up Salesforce as the “largest supplier of digital labor […] all powered by these autonomous AI agents”. The reality didn’t come anywhere near matching the hype.

In the first 6 weeks of 2026, that all changed.

There were glimmers in late 2025, but we weren’t quite there yet. This week, however, I’ve had multiple conversations with other CEOs and sales leaders that all came down to the same thing: the agent reality has caught up to the hype.

In December 2024, I wrote an article titled “Are You Behind in AI?”. True to Betteridge’s Law, the answer was “no”. Fourteen months later, the answer is almost certainly a resounding “yes”.

I can’t over-stress this: there’s a sense of near-panic FOMO. Every minute spent not taking advantage of these agent capabilities feels like a massive wasted opportunity. One CEO told me he’s working 18-hour days to get his agent system off the ground. I’ve certainly felt it myself. Hell, I learned today I have to break down and get glasses because my 45-year-old eyes can’t look at a terminal for 10 hours straight anymore.

In this article, I’ll do my best to share what’s changed and how this new crop of agents work. I’ll try to do it with a minimum of jargon and technical detail. If you’re not yet taking advantage, I hope you take this knowledge and light a fire under your RevOps leaders ASAP.

Now, let’s see how some programs that read and write text files on your computer while looking like a 1980s DOS game are changing the world.

The context

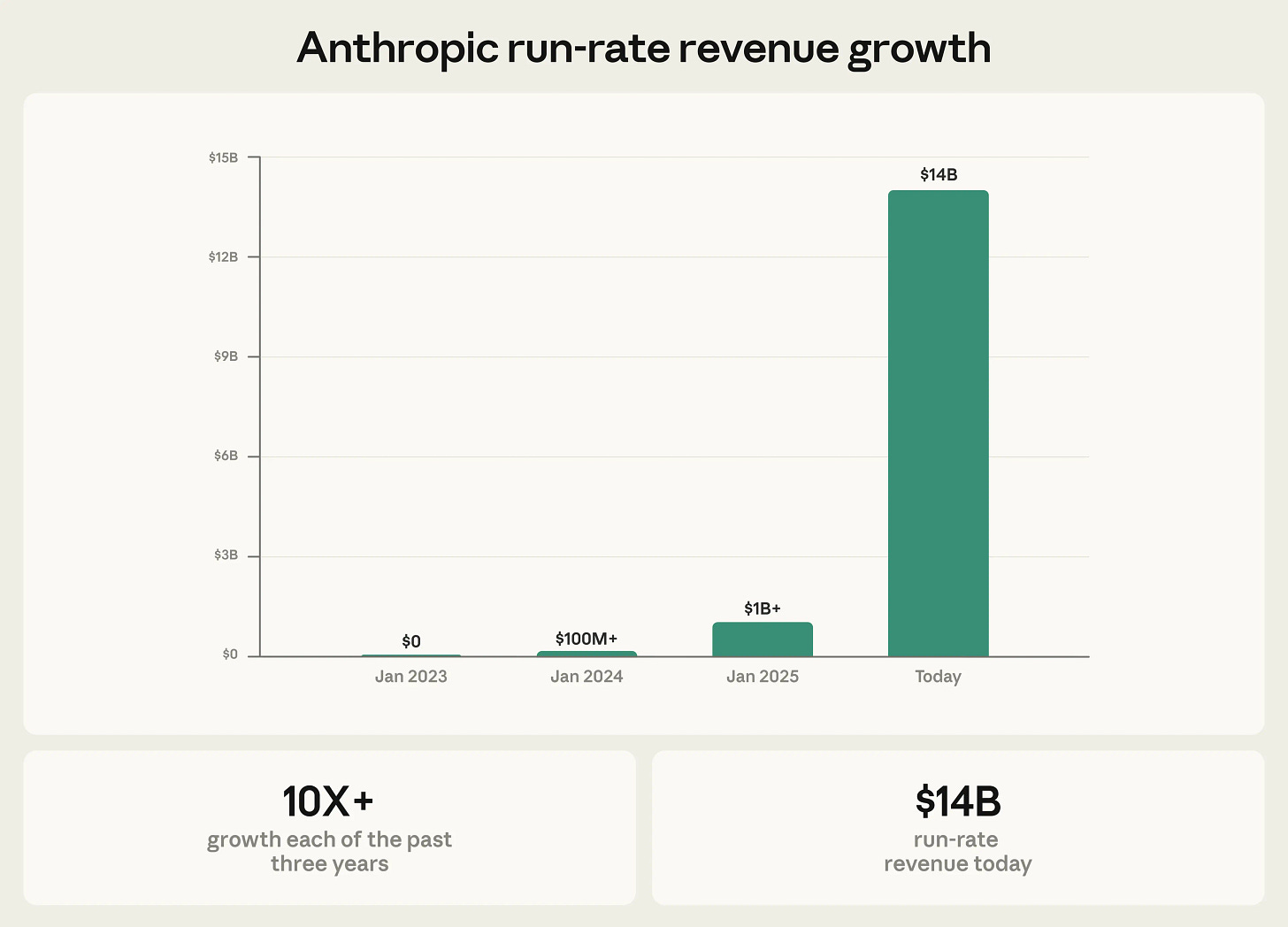

Anthropic last Thursday raised $30B at a $380B valuation. Their announcement included this chart:

Why yes, they did go from a $1B to a $14B run rate in less than 14 months (Jan 2025 - Feb 2026). As Jason Lemkin points out[1], Salesforce took 10 years (2009 - 2019) to do roughly the same thing. A big portion of this growth comes from one product: Claude Code. Released in May of 2025, Claude Code itself is at a $2.5B run rate that’s accelerating like crazy. Per Anthropic’s announcement:

> Claude Code represents a new era of agentic coding, fundamentally changing how teams build software. Claude Code was made available to the general public in May 2025. Today, Claude Code’s run-rate revenue has grown to over $2.5 billion; this figure has more than doubled since the beginning of 2026. The number of weekly active Claude Code users has also doubled since January 1. A recent analysis estimated that 4% of all GitHub public commits worldwide were being authored by Claude Code—double the percentage from just one month prior.
>
> Business subscriptions to Claude Code have quadrupled since the start of 2026, and enterprise use has grown to represent over half of all Claude Code revenue. The same capabilities that make Claude exceptional for coding are also unlocking other new categories of work: financial and data analysis, sales, cybersecurity, scientific discovery, and beyond.
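For a sense of scale, the two timelines can be turned into compound growth rates. This is a back-of-the-envelope sketch under two assumptions of mine, not Anthropic’s or Jason Lemkin’s: the Jan 2025 to Feb 2026 window is treated as 13 months, and “roughly the same thing” for Salesforce is read as the same 14x multiple spread over 10 years. `compound_rate` is a hypothetical helper.

```python
# Back-of-the-envelope growth comparison using the figures quoted above.
# Assumptions: Jan 2025 -> Feb 2026 = 13 months; Salesforce grew by the
# same 14x multiple over 10 years (120 months).

def compound_rate(multiple: float, periods: int) -> float:
    """Constant per-period growth rate that turns 1x into `multiple` over `periods`."""
    return multiple ** (1 / periods) - 1

anthropic_monthly = compound_rate(14, 13)    # $1B -> $14B in ~13 months
salesforce_monthly = compound_rate(14, 120)  # ~14x over 10 years, per month
salesforce_annual = compound_rate(14, 10)    # ~14x over 10 years, per year

print(f"Anthropic:  {anthropic_monthly:.1%} per month")
print(f"Salesforce: {salesforce_monthly:.1%} per month ({salesforce_annual:.1%} per year)")
```

Under those assumptions, Anthropic’s pace works out to roughly 22.5% a month, versus roughly 2.2% a month (about 30% a year) for Salesforce.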

This growth has coincided with software stocks losing $1T in market value over the past few weeks. The thesis is that Claude Code is so powerful that people won’t buy software anymore—they’ll just build whatever they need.[2] So what does a new tool for building software have to do with GTM?

I’ve spent a good part of my life as a software engineer. I care deeply about the craft of writing code—so much so that I named my daughter after the first programmer. After my first hour with Claude Code earlier this year, I realized something: I’ll never personally write code again. After another hour with Claude Code, I realized something else: I’ll never do any work involving a computer the same way again.

The coding is a Trojan horse. Claude Code may look like a coding tool, but it’s actually the first truly capable general-purpose AI agent suitable for business use.

Anthropic knows this. It’s why they shipped Cowork, which is really just a wrapper around Code for non-developers. OpenAI knows this. It’s why they shipped Codex and paid big bucks to win the bidding war for the OpenClaw guy.

Many of us in GTM got our first impression of AI “agents” from the early attempts at AI SDRs. These were mostly just spam cannons that generated email at scale, with quality ranging from “halfway decent” to “incredibly embarrassing”. Needless to say, they didn’t work.

By the time I wrote my 2025 post about agents, there was a little more nuance. However, that post now looks comically quaint. If your idea of an “agent” in 2026 is an inept AI SDR, a chatbot that you can talk to, a custom GPT that can summarize a few files, or an n8n workflow that uses an LLM for a couple of steps, the world has moved on.

After 2+ years of “agents” being an AI buzzword, they’ve finally arrived. They just don’t look quite like most people imagined even a few months ago.

How agents actually work

These breakthrough agents aren’t cloud-based software with drag-and-drop workflow builders. They’re just programs that you install on your computer. They do useful things by thinking about stuff, reading stuff, writing stuff and running other programs—not unlike how you and I get work done. When they need to think about what to do, they send data to an AI model running in the cloud like Claude or an OpenAI GPT.

They differ from the “normal” software you’re used to in 4 key ways:

1. They’re text-based programs[3] that run in a terminal. While most of them also have a graphical UI, they’re most powerful in their native form. If you’re old enough, you might remember text-based DOS programs. They’re like that. This gives them their hacker-y feel.

2. They can run other programs. There’s a whole ecosystem of utility programs on our computers that do all kinds of useful stuff that most of us never see—things like searching files, editing files, fetching web pages, interacting with other software, etc. They’re all there as text-based commands available inside the aforementioned terminal.

3. They can write their own custom programs. Want to analyze a complicated spreadsheet? The agent can use the AI model to write specialized code on demand, which it can then run just for that purpose.

4. They “know” specific stuff (like how to do a task) and “remember” things by reading and writing to simple text files[4]. You can give them specialized knowledge just by explaining it in a text file.
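The second and third differences above (running other programs and writing new ones on demand) can be sketched together in a few lines of Python. This is a minimal illustration, not how any particular agent is built: the “generated” script is hard-coded where a real agent would get it back from the AI model, and `run_tool`, `GENERATED_SCRIPT` and the sample pipeline data are all hypothetical. It writes a small analysis program to disk, runs it as a separate program, and captures the text it prints.

```python
import csv
import subprocess
import sys
import tempfile
from pathlib import Path

# Stand-in for code the AI model would write on demand. In a real agent this
# string would come back from the model; here it is hard-coded (hypothetical).
GENERATED_SCRIPT = '''
import csv, sys
from collections import Counter

with open(sys.argv[1], newline="") as f:
    counts = Counter(row["stage"] for row in csv.DictReader(f))
for stage, n in sorted(counts.items()):
    print(f"{stage}: {n}")
'''

def run_tool(args: list[str]) -> str:
    """Run another program, roughly like an agent's terminal tool, and return what it printed."""
    result = subprocess.run(args, capture_output=True, text=True, check=True)
    return result.stdout

with tempfile.TemporaryDirectory() as tmp:
    # Sample data standing in for "a complicated spreadsheet".
    data = Path(tmp) / "pipeline.csv"
    with data.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["account", "stage"])
        writer.writerows([["Acme", "Discovery"], ["Globex", "Proposal"], ["Initech", "Discovery"]])

    # Save the "generated" program, then run it with the same Python interpreter.
    script = Path(tmp) / "analyze.py"
    script.write_text(GENERATED_SCRIPT)
    output = run_tool([sys.executable, str(script), str(data)])

print(output)
```

The agent never needed a built-in “count deals by stage” feature; it wrote a throwaway program for the job and read the program’s output like any other text.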

The thing that makes this special is gluing it all together with AI and running it over and over again in a loop.

The “agentic loop”

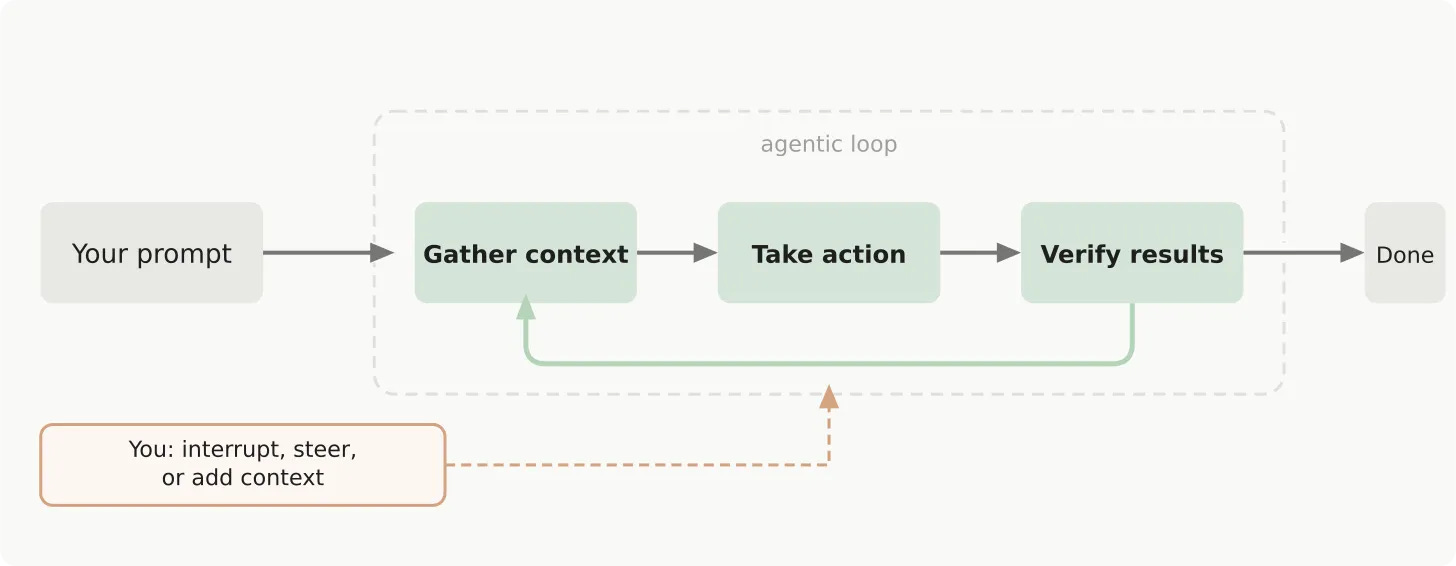

Agents basically are a loop. They start with some context you provide and a task you ask it to do. From there the AI decides what to do next. That might involve reading an email, writing a program, checking LinkedIn, sending a Slack or searching the web for information. It then takes the output of that step and feeds it back into the AI again… and again until it decides it’s done5.

This agentic loop looks like this:

This simple construct is where the magic happens. Anthropic, OpenAI and others have invested mind-boggling resources into building models that are very, very good at handling these kinds of loops. They follow instructions, remember important details, make good decisions, even catch and correct their own mistakes.
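The loop itself is simple enough to sketch. The example below is a toy: `fake_model` is a hard-coded, hypothetical stand-in for a real call to Claude or GPT, and `search_web` is a hypothetical tool that always returns the same answer. The point is the shape of the loop: ask the model what to do, run the tool it picks, feed the result back, and stop when it answers or hits a turn limit.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Step:
    """What the model decides to do next: call a tool, or finish with an answer."""
    tool: Optional[str] = None
    args: dict = field(default_factory=dict)
    answer: Optional[str] = None

def fake_model(history: list[str]) -> Step:
    """Hypothetical stand-in for a cloud model call.
    It searches once, then answers using whatever the search returned."""
    if not any(h.startswith("search_web ->") for h in history):
        return Step(tool="search_web", args={"query": "Acme Corp headquarters"})
    return Step(answer="Acme Corp appears to be based in " + history[-1].split("-> ")[1])

TOOLS = {
    # Hypothetical tool; a real agent would actually query a search API here.
    "search_web": lambda query: "Springfield",
}

def run_agent(task: str, model=fake_model, max_turns: int = 10) -> str:
    history = [f"task: {task}"]
    for _ in range(max_turns):                       # stop after a fixed number of turns
        step = model(history)                        # 1. the model decides what to do next
        if step.answer is not None:                  # 2. ...or decides it's done
            return step.answer
        result = TOOLS[step.tool](**step.args)       # 3. run the tool it chose
        history.append(f"{step.tool} -> {result}")   # 4. feed the output back in
    return "Stopped: hit the turn limit"

print(run_agent("Find where Acme Corp is based"))
```

Everything interesting lives inside the model call; the surrounding code just keeps passing the growing history back in.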

It really wasn’t until November of 2025 that models got good enough at running an agentic loop. Now they’re really good. They think through problems, make plans, and (almost always) do what the user asks.

Better models are the primary reason agents have caught up to the hype—but they’re not the only one. All this AI doesn’t add up to an agent if it can’t do actual useful work. There are three other (comparatively simple) pieces that complete the puzzle: tools, skills and memory.

Tools

I mentioned before that agents can run programs (both existing ones and ones they write). They can also read files, write files, search the web, run other agents or talk to other systems. You can think of these actions as “tools” the agent can use.

For example, you could tell an agent to read a list of prospects in Excel and update each row with their city. It might read the prospects from the Excel file (tool), use Chrome to find each prospect on LinkedIn (tool), read their bio to find their location (tool), and then write the data back to Excel (tool).
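Here is that prospect example written out as a sketch. A few assumptions: a CSV string stands in for the Excel file, the hypothetical `lookup_city` returns canned values instead of actually visiting LinkedIn, and the steps run in a fixed order, whereas a real agent would have the model choose which tool to call at each turn.

```python
import csv
import io

def read_prospects(csv_text: str) -> list[dict]:
    """Tool: read the prospect list (a CSV string stands in for the Excel file)."""
    return list(csv.DictReader(io.StringIO(csv_text)))

def lookup_city(name: str) -> str:
    """Tool: hypothetical stand-in for finding the LinkedIn bio and reading the location."""
    fake_bios = {"Dana Lee": "Austin", "Sam Ortiz": "Denver"}  # placeholder data
    return fake_bios.get(name, "Unknown")

def write_prospects(rows: list[dict]) -> str:
    """Tool: write the updated rows back out as CSV text."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return out.getvalue()

prospects = read_prospects("name,company\nDana Lee,Acme\nSam Ortiz,Globex\n")
for row in prospects:
    row["city"] = lookup_city(row["name"])  # one tool call per prospect
updated = write_prospects(prospects)
print(updated)
```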

The key advancement here is that agents make it easy to plug in new tools that can do different things or access different data. That might be an MCP server that connects to HubSpot or the ability to call an enrichment API. This makes a generic agent able to access the capabilities and data necessary to do your specific tasks. Think of it like giving a new employee logins to the various systems they’ll use to do their job.
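The “plug in new tools” idea can be illustrated with a small registry. This is a simplified stand-in, not the Model Context Protocol: each tool is a plain Python function plus a one-line description the model would read to decide when to use it. `enrich_company` and `get_crm_contact` are hypothetical placeholders for an enrichment API and a HubSpot connection, and their return values are made up.

```python
from typing import Callable

TOOLS: dict[str, Callable[..., str]] = {}

def tool(description: str):
    """Decorator that registers a function as a tool the agent can see and call."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        fn.description = description  # shown to the model so it knows when to use the tool
        TOOLS[fn.__name__] = fn
        return fn
    return register

@tool("Look up firmographic data for a company domain (hypothetical enrichment API).")
def enrich_company(domain: str) -> str:
    # A real version would call your enrichment vendor's API here.
    return f"{domain}: employees=250 (placeholder)"

@tool("Fetch a contact record from the CRM (hypothetical stand-in for a HubSpot connection).")
def get_crm_contact(email: str) -> str:
    # A real version would query the CRM; this returns a placeholder record.
    return f"{email}: stage=Opportunity (placeholder)"

def describe_tools() -> str:
    """The tool list an agent would put in front of the model."""
    return "\n".join(f"- {name}: {fn.description}" for name, fn in TOOLS.items())

print(describe_tools())
print(TOOLS["enrich_company"](domain="acme.com"))
```

Adding a capability is just adding another decorated function; the agent loop itself doesn’t change.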

Just like with a new employee, though, having the login to the CRM isn’t enough. They need to know how to do things your way. That’s where skills come in.

Skills

Tools give agents the ability to take specific actions. They’re pretty good at guessing what actions to take based on the task at hand—not unlike a reasonably eager and intelligent employee. Often, however, they need more guidance and context on how to get an entire job done using their available tools. That’s where skills come in.

Skills are remarkably simple. They’re basically just text files that describe how to do something. For example, Claude’s ability to work with Excel mostly stems from the instructions written up in this file right here. When you ask Claude to do something in Excel, it looks for relevant skills, finds this file, reads the instructions and then gets to work.

Like tools, the power comes when you write your own skills (or download existing ones). This makes it easy to extend these agents with new capabilities for your specific needs.
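A rough sketch of how an agent might find and load a skill. It assumes each skill lives in its own folder as a `SKILL.md` file with a short name and description at the top, loosely modeled on the layout Anthropic uses for Claude’s skills. The parsing and the word-overlap matching are simplified assumptions (a real agent lets the model judge relevance), and the `excel-reports` skill is made up.

```python
import tempfile
from pathlib import Path
from typing import Optional

# Hypothetical skill file: a short header, then plain-language instructions.
SKILL_TEXT = """---
name: excel-reports
description: Build or edit Excel spreadsheets and reports
---
1. Keep formulas instead of pasting values.
2. Put a summary tab first.
"""

def parse_skill(path: Path) -> dict:
    """Split a skill file into its header fields and its instruction body."""
    _, header, body = path.read_text().split("---\n", 2)
    fields = dict(line.split(": ", 1) for line in header.strip().splitlines())
    return {**fields, "instructions": body.strip()}

def find_relevant_skill(task: str, skills_dir: Path) -> Optional[dict]:
    """Very rough relevance check: word overlap between the task and each description."""
    task_words = set(task.lower().split())
    best, best_score = None, 0
    for path in sorted(skills_dir.glob("*/SKILL.md")):
        skill = parse_skill(path)
        score = len(task_words & set(skill["description"].lower().split()))
        if score > best_score:
            best, best_score = skill, score
    return best

with tempfile.TemporaryDirectory() as tmp:
    skill_dir = Path(tmp) / "excel-reports"
    skill_dir.mkdir()
    (skill_dir / "SKILL.md").write_text(SKILL_TEXT)
    skill = find_relevant_skill("Build a pipeline report in Excel", Path(tmp))

print(skill["name"])
print(skill["instructions"])  # this text would be added to the model's context
```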

Memory

Memory sounds much fancier than it is. Like everything else here, we’re basically talking about text files. These simply give the agent context about the job it’s trying to do. This is somewhat analogous to onboarding and coaching.

For example, an SDR agent would need to know about the company it works for, messaging and positioning, ICP and specific information about the product it’s selling. This gives it the context to properly create messaging for a prospect. This might live in a text file (or files) that the agent can access and that serves as a kind of onboarding document.

Over time, you might correct the agent’s behavior (e.g. “handle objection X with response Y instead of Z”) and ask it to update its memory text files with what it learns. Next time it’ll have that information handy and do things differently.
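Memory in this sense can be as simple as reading and appending to a Markdown file. A minimal sketch, assuming a hypothetical `MEMORY.md` file and helper names (`load_memory`, `remember`) invented for illustration; the onboarding content is placeholder text.

```python
import tempfile
from pathlib import Path

def load_memory(path: Path) -> str:
    """Read the agent's onboarding/memory notes so they can go into the model's context."""
    return path.read_text() if path.exists() else ""

def remember(path: Path, lesson: str) -> None:
    """Append a correction so the next run starts with it."""
    with path.open("a") as f:
        f.write(f"- {lesson}\n")

with tempfile.TemporaryDirectory() as tmp:
    memory_file = Path(tmp) / "MEMORY.md"  # hypothetical file name
    memory_file.write_text("# SDR onboarding\n- ICP: (your ideal customer profile goes here)\n")

    remember(memory_file, "Handle objection X with response Y instead of Z")
    context = load_memory(memory_file)  # what the next session would start from

print(context)
```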

Wrapping up

The dam has broken. The Rubicon has been crossed. There’s a disturbance in the Force. Something big is happening.

After years of hype, AI agents are really here. Claude Code was the first to find the right mix of model quality, data access and extensibility. OpenAI followed suit. OpenClaw has as well, in a much more chaotic way. More will follow.

Don’t let the weird terminal UIs and text files intimidate you. These agents can do way more than code—and smart operators are already putting them to use for GTM. There are plenty of times when the hype is fake and the FOMO’s not worth it. This isn’t one of those times. Get to work or fall behind.

[1] Jason actually says 17 years. That’s wildly inaccurate, but the gist is the same.

[2] There are reasons to view this thesis as overdone, but it’s honestly hard to say by how much at this point.

[3] Both Claude Code/Cowork and OpenAI Codex offer more graphical UIs as well.

[4] Usually formatted as Markdown, which is just a very simple way of specifying headings, bullets and other basic formatting without requiring a full-on editor.

[5] Or until it hits a certain number of “turns”.