The Composable GTM Ecosystem, Part 2

It's a workflow world. We just live in it.

Last week, in Part 1, I wrote that the CRM-centric model of the GTM technology ecosystem is under threat. The CRM represents a compromise, one that combines GTM data, workflows and user interface into a single product. Until recently, those things were so intertwined that the only way to do any one of them effectively was to tightly couple all of them together.

That compromise may no longer be necessary. If technology (AI or otherwise) increasingly does jobs performed by humans today, it removes the final thing holding CRM-centrism together: the requirement to always have a human in the loop.

This creates an opening to compose a solution from best-of-breed components. We’ve already seen this happen in GTM-adjacent markets like e-commerce and content management.

But how are teams actually composing these solutions? That’s the job of the orchestration layer.

The emerging orchestration layer

If you live in certain corners of LinkedIn, you’ve likely seen comment-bait workflow screenshot posts or “20 tools I used to generate a gazillion dollars in pipeline” posts. While the posts are clickbait, the concept is real.

Smart, technically inclined GTM folks really are composing increasingly powerful systems by orchestrating data and process flows that combine specialized services. To do this, they’re tapping into the rapidly evolving GTM orchestration layer: the part of the tech stack that connects all the individual vendor tools into holistic workflows that do something valuable.

Coincidentally, this layer is where most of the value in the GTM technology ecosystem is currently accruing. Clay, the best-known player in this space, just allowed employees to sell stock at a $5B valuation. Another contender, n8n, just quietly raised at $2.5B. Needless to say, it’s an interesting[1] time to be an orchestrator.

The rest of this piece lays out the 3 major orchestration layer models and speculates (wildly) on how this might all evolve for those of us trying to build GTM systems. Here are the 3 models:

Low-Code - drag-and-drop workflow builders that allow users to craft processes using building blocks for logic and integrations

Aggregated - a special case of low-code workflow builder and data marketplace largely represented by one player: Clay

Agentic - AI that, given a directive, can connect with systems and achieve goals on a user’s behalf

Let’s look at each.

Low-code workflows

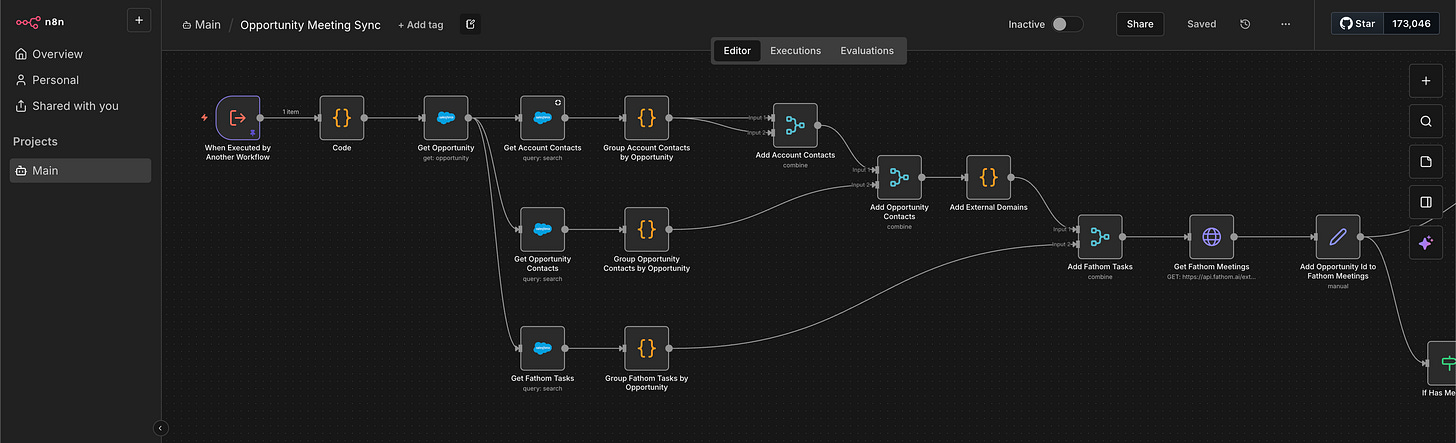

Low-code (or no-code) workflow tools provide a graphical user interface that makes it easy to connect systems together. Zapier, founded in 2011, was an early example. Make is another. Lately, n8n has emerged as the darling of more technically inclined users.

The idea is you can drag-and-drop integrated connectors and logic together to do things like update a CRM record when a form is submitted on your website—without having to know how to write code to connect those tools together via APIs.
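For a sense of what these tools abstract away, here is a minimal sketch of the form-to-CRM example written by hand. Everything in it is hypothetical: the `crm` dictionary stands in for a real CRM’s API, and the field names (`email`, `name`, `company`, `last_form_submitted`) are assumptions for illustration, not any vendor’s schema.

```python
from datetime import datetime, timezone


def handle_form_submission(crm: dict, form: dict) -> dict:
    """Upsert a CRM record, keyed by email, when a website form is submitted.

    `crm` is a plain dict standing in for a real CRM (hypothetical).
    """
    email = form["email"].strip().lower()
    # Reuse the existing record if there is one, otherwise create it.
    record = crm.setdefault(email, {"email": email})
    # Copy over only the fields the form actually supplied.
    for field in ("name", "company"):
        if form.get(field):
            record[field] = form[field]
    record["last_form_submitted"] = datetime.now(timezone.utc).isoformat()
    return record


crm = {"ada@example.com": {"email": "ada@example.com", "name": "Ada"}}
updated = handle_form_submission(crm, {"email": " Ada@Example.com ", "company": "Acme"})
print(updated["company"])  # Acme
```

Even this toy version has to normalize the email, decide between create and update, and avoid overwriting fields the form left blank. A low-code connector hides that kind of detail, along with authentication and retries, behind a single block.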

These tools typically offer massive libraries of pre-built integrations—most of which serve use cases that have nothing specifically to do with GTM. Zapier boasts more than 10,000 while n8n has more than 1,000. They also allow for extending integrated capabilities by providing lower-level building blocks that can access any available API.

While their foundations are in traditional drag-and-drop style workflows, these tools have recast themselves as systems for building AI agents. It’s a pretty natural fit because they make it relatively easy to access the data and systems that agents need to do interesting things.

What they don’t typically do is act as a marketplace for the tools they integrate with. You don’t pay Zapier or n8n for access to the tools and data their workflows integrate with—it’s up to you to sign up directly with those vendors and then “bring your own key” to enable workflow access. The workflow platforms themselves make money with a usage-based model that charges per step or per execution.

One player in the low-code workflow space, however, takes a different approach.

Aggregated workflows

Ben Thompson, tech industry analyst and publisher of the popular Stratechery newsletter, has written extensively about what he calls Aggregation Theory, which describes how platforms work in the internet era.

He starts by defining traditional modes of competition that apply to any market:

The value chain for any given consumer market is divided into three parts: suppliers, distributors, and consumers/users. The best way to make outsize profits in any of these markets is to either gain a horizontal monopoly in one of the three parts or to integrate two of the parts such that you have a competitive advantage in delivering a vertical solution.

Aggregation Theory posits that it used to make the most competitive sense to integrate scarce supply with distribution (e.g. newspapers integrated editorial content—with ads—and physical delivery networks). But, in the internet era, it’s more profitable to integrate user experience with distribution so you control demand for an otherwise abundant supply (e.g. Google gets an infinite supply of individual pages from the whole web which they serve up—with ads—to their users).

Historically a lot of GTM tech—especially in the data enrichment space—worked like the newspapers. ZoomInfo built a massive business and contact database (supply) which they integrated with a sales engine (distribution). They bragged about the billions of data points that they’d mined, cleaned and structured. Other data providers did the same. Everyone competed on the size and quality of their proprietary supply of data, not the user experience of using that data.

Clay came along and flipped it all around. They built the distribution mechanism and competed on user experience while selling easy access to myriad data suppliers—both via direct integrations and “bring your own key” API calls. If the user chooses the convenience of direct integration, they pay Clay some “credits” for each piece of data they retrieve. That revenue then gets split with the integrated partner.

That model let Clay tout access to all kinds of data without being responsible for the data itself. They recognized that the data was a commodity with many willing suppliers. They could compete on the integrated experience, control demand to get favorable terms from suppliers and disrupt the supply-integrated companies like ZoomInfo.

In fact, if one supplier gives bad data, the best practice is to try another one, paying Clay more credits in the process. Not only do they not get blamed for bad data, they actually make more money! That’s a neat trick.
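The “try another supplier” pattern can be sketched as a simple waterfall. This is an illustration under stated assumptions, not Clay’s implementation: the provider names, the credit costs, and the rule that credits are charged on every attempt are all made up for the example.

```python
from typing import Callable, Optional

# Hypothetical provider: (name, credit cost, lookup function returning an email or None).
Provider = tuple[str, int, Callable[[str], Optional[str]]]


def waterfall_enrich(domain: str, providers: list[Provider]) -> tuple[Optional[str], int]:
    """Try providers in order until one returns data; return (result, credits spent)."""
    credits_spent = 0
    for name, cost, lookup in providers:
        credits_spent += cost  # assumption: each attempt is charged, hit or miss
        result = lookup(domain)
        if result is not None:
            return result, credits_spent
    return None, credits_spent


providers = [
    ("provider_a", 2, lambda d: None),          # comes back empty
    ("provider_b", 3, lambda d: f"sales@{d}"),  # succeeds
    ("provider_c", 5, lambda d: f"info@{d}"),   # never reached
]
email, spent = waterfall_enrich("example.com", providers)
print(email, spent)  # sales@example.com 5
```

Under these assumptions, the miss from the first provider still costs credits, which is the dynamic described above: the aggregator earns on every attempt, whichever supplier ends up delivering.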

From this foundation, Clay has rapidly expanded into a unique kind of workflow platform that combines a Stratechery-style Aggregator with the other features found in the low-code workflow tools. They also caught the AI wave early with their “Claygent” feature to scrape data from the web.

So is Clay the future of GTM orchestration? Maybe not.

Agentic workflows

Now we get to what may well be the final boss of workflow technology: agents.

Agents use AI to do the work for you. You tell them what you want done and they go figure out how to make it happen—making decisions, accessing data and using various tools along the way. This differs from the workflow tools we’ve described so far which require step-by-step instructions spelled out for each specific task.

It all sounds great, but we’re about 2 years into agent hype without a whole lot of working agents to show for it. There’s reason to believe, however, that agents might finally be coming into their own.

Over the past couple of months, Claude Code has broken containment from a developer tool to something that non-coders are exploring. I can personally attest to Claude Code’s capabilities: it’s very good. That said, it’s still a command line application that runs in the terminal, which is not everyone’s idea of a good time. Seeing this, Anthropic in January released a friendlier version called Claude Cowork.

Not to be outdone, OpenAI just shipped the latest version of Codex, their Claude Code competitor. Meanwhile, in the gnarlier corners of the AI internet, adventurous agent users have been testing out OpenClaw (f/k/a Moltbot, f/k/a Clawdbot).[2]

These agents have all more or less settled into a general design:

They use a powerful LLM (e.g. Opus 4.5, GPT 5.2) which has been trained to follow instructions and reason about next steps while also having a reasonably large memory to keep track of things during long-running tasks

They can write code and access things on your computer (more or less securely)

They can use a web browser (more or less securely)

They know how to connect to external systems using APIs or MCP

They can be told how to do specific tasks in specific ways via Skills
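The general design above boils down to a loop: the model picks an action, a tool runs it, and the result feeds the next decision. The sketch below is a toy version under heavy assumptions. `scripted_model` is a hard-coded stand-in for an LLM, and the two tools (`lookup_account`, `draft_email`) are hypothetical placeholders for real API or MCP integrations.

```python
from typing import Callable

# Hypothetical tools; a real agent would wrap APIs, MCP servers, a browser, etc.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_account": lambda arg: f"{arg}: 120 employees, evaluating vendors",
    "draft_email": lambda arg: f"Draft written for {arg}",
}


def scripted_model(goal: str, history: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM: choose the next (tool, argument) from what has happened so far."""
    if not history:
        return "lookup_account", goal
    if len(history) == 1:
        return "draft_email", goal
    return "done", ""


def run_agent(goal: str, max_steps: int = 5) -> list[str]:
    """Ask the model for the next action, run the tool, and feed the result back."""
    history: list[str] = []
    for _ in range(max_steps):  # cap the steps so the loop always terminates
        tool, arg = scripted_model(goal, history)
        if tool == "done":
            break
        history.append(TOOLS[tool](arg))
    return history


for step in run_agent("Acme Corp"):
    print(step)
```

The difference from a low-code workflow is where the sequence lives. Here the order of steps is decided at run time by the model rather than drawn in advance by the user, so swapping in a real LLM changes the decisions without changing the loop.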

Slowly but surely, these capabilities have added up to something resembling an actual colleague that can get real work done. It seems likely that the future of GTM orchestration will look like a lot of other knowledge work: done by agents with some (for now) human guidance.

If that AI vision comes to fruition, there’s very little reason for the low-code workflow tools to continue to exist except in niche cases where entirely deterministic processes are necessary. Even then, it’s unclear that those workflows couldn’t just be vibe coded—replaced by deterministic code written, deployed and monitored by agents.

Wrapping up

If I had to bet on a winner in the GTM workflow layer, I’d bet on the AI agents. They can produce code for the fully deterministic tasks (e.g. update this field if that thing is true) and also learn to properly handle the many semi-deterministic tasks that happen in GTM.

For example, there’s some skill in determining the next step in a sales process based on the totality of information that’s known about the prospect’s situation and the conversations so far. It’s semi-deterministic—there are good choices and bad choices but there’s still a wide range of action that can be taken within the set of good choices. AI agents can do this. Other orchestration models can’t.

So far in this series we’ve looked at the emergence of a composable GTM tech stack as a threat (or perhaps a response) to CRM-centrism and the orchestration layer that might actually enable the composition. In the next piece, we’ll look at the role of the rest of the GTM tech stack and how GTM tech vendors need to evolve to participate in, and benefit from, this composable ecosystem.

[1] And lucrative.

[2] If you want to go to truly weird (and very developer-y) corners of the agent revolution, visit Gas Town, which may be as much art project as agentic coding environment.